In an iPaaS (integration platform as a service), concurrency is the platform’s ability to execute multiple tasks, processes, or workflows at the same time. This lets integrations run in parallel and handle overlapping operations, such as concurrent API calls and large data transfers, efficiently. By dividing workloads into smaller units that run in parallel, concurrency increases throughput, shortens processing time, and makes better use of available resources.

Think of concurrency like a highway system. Without it, data moves along a single-lane road: each task waits for the one ahead, causing slowdowns and bottlenecks. With concurrency, the road becomes a multi-lane highway where many processes travel side by side, reducing congestion and improving speed. And just as traffic signals regulate how many vehicles enter an intersection, concurrency settings control how many operations run at once, so integrations keep moving without overloading the systems they connect.

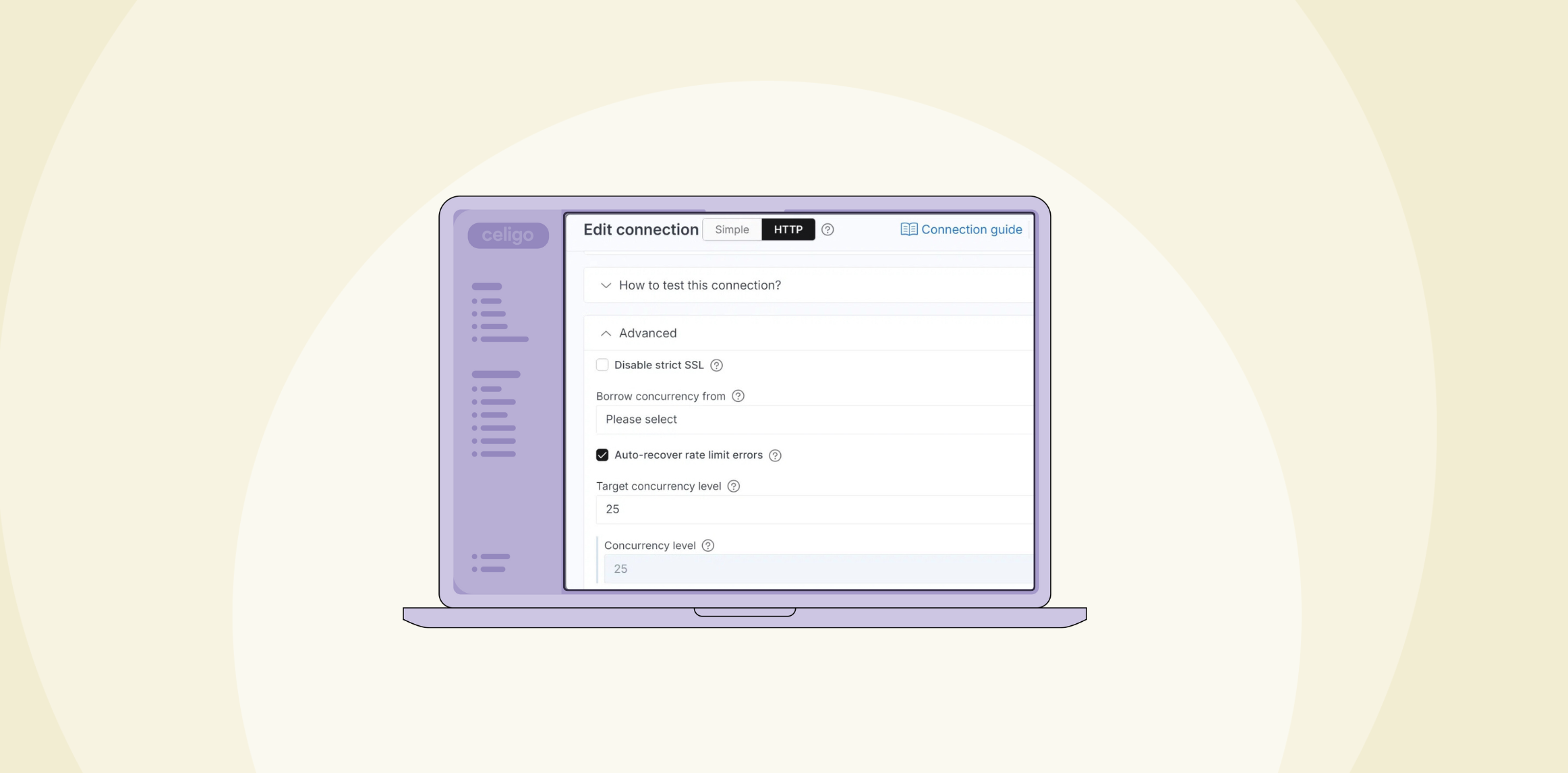

Managing concurrency is critical for high-volume data processing. The right configuration speeds up data synchronization, helps avoid API throttling, and keeps performance consistent. Poorly managed concurrency, on the other hand, can lead to delays, exhausted resource limits, and missed SLAs.

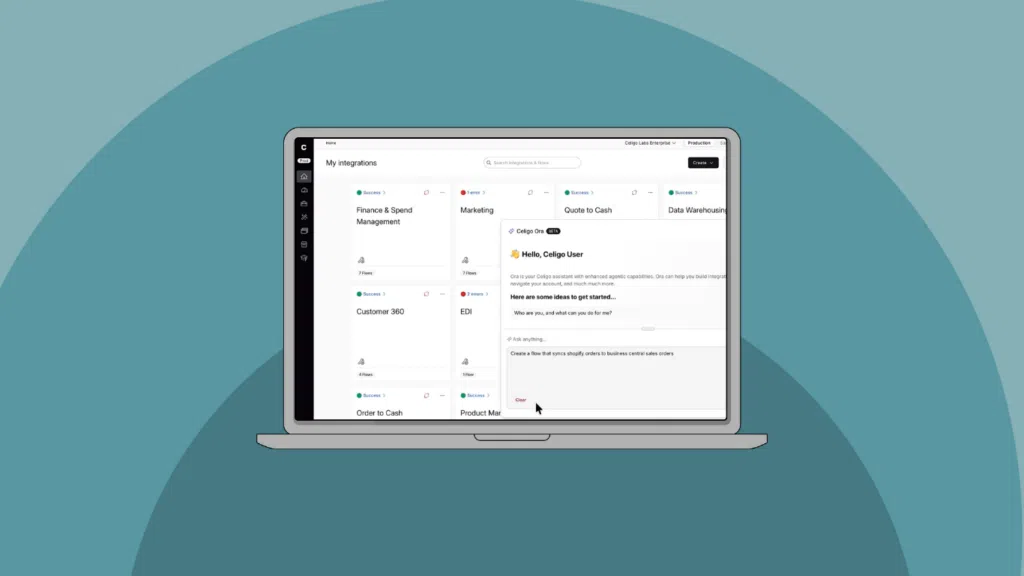

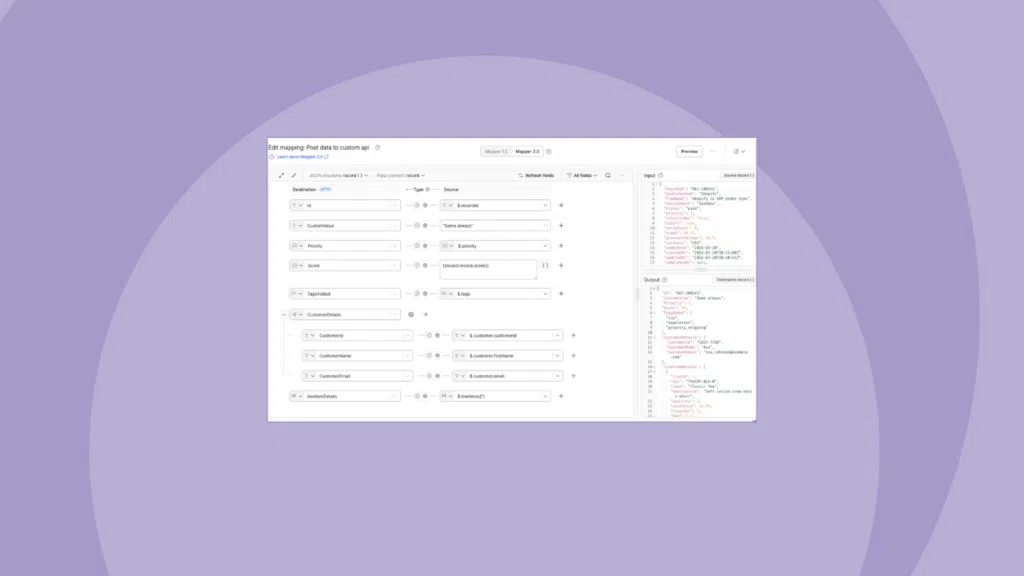
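The trade-off between speed and throttling described above can be illustrated with a small, generic sketch. This is not Celigo configuration or a Celigo API: it uses Python's `asyncio` with a semaphore to cap how many simulated API calls are in flight at once. The function `fake_api_call`, the 0.05-second delay, and the concurrency levels are hypothetical stand-ins for real endpoint calls and real limits.

```python
import asyncio
import time


# Hypothetical stand-in for an API call; a real flow would call an endpoint.
async def fake_api_call(record_id: int, delay: float = 0.05) -> int:
    await asyncio.sleep(delay)  # simulate network latency
    return record_id


async def process_all(record_ids, concurrency: int):
    """Process records with at most `concurrency` calls in flight at once."""
    semaphore = asyncio.Semaphore(concurrency)  # the "number of lanes"
    in_flight = 0
    peak = 0

    async def worker(rid: int) -> int:
        nonlocal in_flight, peak
        async with semaphore:  # wait here if all lanes are busy
            in_flight += 1
            peak = max(peak, in_flight)
            try:
                return await fake_api_call(rid)
            finally:
                in_flight -= 1

    # gather() returns results in the same order as the inputs
    results = await asyncio.gather(*(worker(r) for r in record_ids))
    return results, peak


def run(record_ids, concurrency: int):
    """Run the batch and report results, peak parallelism, and wall time."""
    start = time.perf_counter()
    results, peak = asyncio.run(process_all(record_ids, concurrency))
    elapsed = time.perf_counter() - start
    return results, peak, elapsed


if __name__ == "__main__":
    ids = list(range(20))
    for level in (1, 5):
        _, peak, elapsed = run(ids, level)
        print(f"concurrency={level}: peak in-flight={peak}, elapsed={elapsed:.2f}s")
```

With a limit of 1, the 20 simulated calls run one after another; with a limit of 5 they finish in roughly a fifth of the time. In both cases, the semaphore guarantees that no more than `concurrency` calls reach the endpoint together, which is the lever that helps keep an integration under an API's rate limits.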

In this article, we’ll explore how Celigo’s tools help you configure, monitor, and scale concurrency settings to keep your integrations fast, efficient, and reliable, even under heavy workloads.