MCP security: Key risks and how to stay protected

Enterprise adoption of AI is accelerating, and with it, the rise of agentic automation. AI agents are no longer limited to generating responses. They are executing real business actions across systems, workflows, and data sources. As organizations operationalize LLMs and deploy AI models into production environments, the underlying communication layer becomes critical.

Model Context Protocol (MCP) is rapidly emerging as that standard layer. It defines how agents connect to tools, invoke workflows, and interact with enterprise systems. But as adoption grows, many organizations are discovering that their existing security posture is not designed for this new model of interaction.

In many cases, early MCP adoption happens inside fast-moving pilot programs, where teams focus on enablement and seamless user experiences before they fully assess the risks introduced by new protocols, external tool access, and server-to-server trust. For many teams, MCP expands faster than governance.

That gap matters because AI agents are changing the role of software in the enterprise. Instead of acting only as interfaces for human users, they increasingly operate as decision-support and execution layers that can retrieve context, interpret prompts, call external tools, and trigger follow-on actions across multiple systems. Once an AI model is connected to MCP servers, what used to be an isolated inference step becomes a live operational transaction path.

When AI agents move from answering questions to invoking enterprise systems, integration governance becomes a security requirement. Security must be architected, not assumed. This means rethinking authentication, authorization, and control at every layer where MCP operates, from the protocols used to expose tools to the servers that deliver capabilities and the workflows that carry sensitive context between systems.

For enterprise architects, the central challenge is not whether MCP can make AI more useful. It is whether MCP can be deployed in a way that is observable, enforceable, and resilient against malicious misuse. In practice, MCP security has to be designed as part of the core architecture.

What is MCP, and why does it matter for enterprise security?

MCP is a specification that standardizes how AI agents discover and invoke tools. In this model, tools represent APIs, workflows, and integrations that connect to enterprise systems and data sources. Rather than treating integrations as passive connectors, MCP turns them into callable capabilities that an AI model can execute on demand.

In practical terms, the protocol gives agents a more structured way to request access to external functions, retrieve context from business systems, and perform actions through defined interfaces. That shared specification helps MCP scale across teams.

This shift is significant. AI agents powered by LLMs can now use MCP to trigger structured actions such as updating records, initiating transactions, or orchestrating workflows across multiple systems. These actions are not theoretical. They interact directly with CRM platforms, ERP systems, billing engines, and operational tools that support core business processes. As MCP evolves as a specification, it formalizes how clients and servers exchange context, describe tool capabilities, and coordinate execution through shared protocols.
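To make the exchange concrete: MCP messages are JSON-RPC 2.0, and a tool invocation is a request with the `tools/call` method that carries a tool name and its arguments. The sketch below builds such a request. The tool name `update_crm_record` and its arguments are hypothetical and stand in for whatever a given server actually exposes.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request asking an MCP server to run a tool."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool and arguments, for illustration only.
request = build_tool_call(
    1, "update_crm_record", {"record_id": "A-1001", "status": "closed"}
)
```

Because every invocation has this predictable shape, a control layer can inspect the tool name and arguments before anything reaches a backend system.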

That standardization is helpful for developers and architects because it reduces the need for custom integrations between every AI model and every business application. It creates a more seamless way to connect AI to enterprise tooling.

But it also means attackers can study a known pattern. When a protocol becomes widely adopted, security teams must assume that malicious actors will learn how to exploit weak implementations, misconfigured servers, and overly broad permissions. Those malicious patterns often show up first in smaller MCP deployments.

As a result, MCP expands the attack surface in several ways. First, agents act on behalf of users, which introduces questions around identity, delegation, and trust; each request carries an ID or identity context that must be validated. Second, tool calls can trigger real transactions, making every invocation a potential security event. Third, credentials, tokens, and scopes determine what agents can access, placing greater emphasis on authentication and authorization controls. Finally, MCP endpoints and servers themselves become high-value targets because they expose pathways into critical systems and data sources.

The architectural implications are important. In a traditional application stack, human users directly authenticate to applications or APIs. In an MCP environment, the AI model acts as an intermediary between users and the systems they want to reach. It receives context, interprets intent, and then routes actions to external tools through MCP servers. That means the model layer, the protocol layer, the server layer, and the integration layer all become part of the security boundary.

From an architectural perspective, MCP introduces a standardized client-server architecture where AI agents invoke external tools and APIs. In this client-server model, agents function as clients, while MCP servers expose capabilities backed by enterprise systems. This design enables flexibility and scalability, but it also means that security risks extend beyond the AI model into every connected system those agents can reach.

A compromised prompt, a malicious server, or weak authentication on one tool can create downstream exposure across many services. In other words, the risk does not stop at the model. It follows the protocol into the systems of record. That is why MCP needs enterprise controls from the start.

The MCP security threat landscape

These risks are most pronounced in unmanaged or poorly governed MCP deployments. When MCP servers are deployed without centralized governance, consistent authentication controls, or integration monitoring, security gaps emerge quickly.

In many cases, organizations adopt MCP to accelerate AI model deployment without fully addressing how those agents interact with sensitive systems and data sources, which increases the likelihood of breaches.

The common pattern is familiar. A team sets up MCP servers to expose useful business capabilities to an internal assistant, a support bot, or an operational agent. The project succeeds technically because the protocol makes integration relatively seamless. But in the absence of authentication controls and strong governance, those servers may share tokens too broadly, expose too much context, allow dangerous actions by default, or fail to log the activity needed for detection and investigation.

That is where MCP server risk becomes an enterprise problem. It is also where repeated attacks become more likely.

Authentication and authorization gaps

Weak or inconsistent authentication between MCP clients and servers is one of the most common vulnerabilities. Without strong identity verification, malicious actors can exploit gaps in how agents authenticate to MCP server endpoints. If tokens are not properly validated or encrypted, attackers can reuse them to gain access.

A single weak server can undermine the trust model for the broader environment.

Authorization issues compound the problem. If permissions are overly broad or poorly defined, agents may be granted access to systems and actions they do not need. Token passthrough patterns can introduce additional risk, especially in confused deputy scenarios where an agent unknowingly performs actions on behalf of a malicious actor. In these cases, improper authorization and weak authentication create conditions for privilege escalation and downstream breaches.

In enterprise settings, this can happen when a user has limited permissions in one application, but the agent is connected to servers with broader scopes in another. The mismatch in context and access control allows the agent to perform actions the initiating user should not be able to request.

Mitigating these risks requires strict enforcement of permissions, continuous validation of tokens, and ensuring every request is tied to a verified ID. It also requires best practices such as short-lived credentials, strong binding between user identity and tool access, and explicit server-side checks before actions are executed.
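As a rough illustration of those explicit server-side checks, the sketch below assumes the token's signature has already been verified upstream and that its claims have been parsed into a simple structure. `TokenClaims`, `authorize_call`, and the scope names are hypothetical. The audience check is what rejects a token that was issued for a different server and merely passed through.

```python
import time
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class TokenClaims:
    subject: str          # verified user or agent ID
    audience: str         # the MCP server this token was issued for
    scopes: frozenset     # permissions granted to the token
    expires_at: float     # expiry as a Unix timestamp


def authorize_call(
    claims: TokenClaims,
    server_id: str,
    required_scope: str,
    now: Optional[float] = None,
) -> Tuple[bool, str]:
    """Check expiry, audience, and scope before a tool is allowed to run."""
    now = time.time() if now is None else now
    if now >= claims.expires_at:
        return False, "token expired"
    if claims.audience != server_id:
        # Rejects tokens minted for another server (token passthrough).
        return False, "token issued for a different server"
    if required_scope not in claims.scopes:
        return False, "missing scope"
    return True, "ok"
```

Running these checks on every request, rather than once per session, keeps a stale or misdirected token from being honored later in a workflow.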

Prompt injection and tool injection

Prompt injection attacks represent a growing concern for MCP-enabled workflows. In these scenarios, malicious prompts are designed to manipulate how an AI model interprets instructions, leading it to invoke unintended tools or actions. When LLMs are connected to MCP servers, the impact of prompt injection increases because the agent can execute real operations.

The risk is not limited to obvious attacks. A malicious document, ticket, email, or knowledge base entry can contain hidden instructions that alter how the model interprets context. If the model is allowed to call tools based on that manipulated context, it may leak data, retrieve records from external systems, or trigger harmful actions.

What would once have been a bad answer now becomes a bad transaction. MCP, therefore, turns prompt mistakes into execution risks.

Tool injection extends this concept further. Attackers may attempt to introduce malicious tool definitions or modify existing ones. This can alter how agents interact with MCP server endpoints and data sources.

For example, a malicious tool could silently redirect data to an external system, resulting in data exfiltration. In environments with many servers and loosely curated tool catalogs, malicious tool exposure becomes a serious governance issue.

Mitigating prompt-based attacks requires validating inputs, filtering prompts, constraining tool selection, and ensuring that invocation logic cannot be overridden by untrusted context. Best practices also include limiting what servers can expose, requiring approvals for high-risk actions, and, where possible, separating retrieval context from execution context.
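One way to keep untrusted context from driving execution is to record where the context behind a proposed tool call came from and to gate the call accordingly. The following is a minimal sketch under that assumption. The tool names, source labels, and the `gate` function are hypothetical, and a real deployment would need a reliable way to attach provenance to context.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_APPROVAL = "require_approval"
    DENY = "deny"


# Hypothetical catalogs; a real deployment would load these from policy.
ALLOWED_TOOLS = {"search_tickets", "get_order", "issue_refund"}
HIGH_RISK_TOOLS = {"issue_refund"}
TRUSTED_SOURCES = {"user_prompt", "system_policy"}


@dataclass
class ProposedCall:
    tool: str
    context_sources: List[str]  # where the context behind this call came from


def gate(call: ProposedCall) -> Decision:
    """Decide whether a model-proposed tool call may proceed."""
    if call.tool not in ALLOWED_TOOLS:
        return Decision.DENY
    tainted = any(src not in TRUSTED_SOURCES for src in call.context_sources)
    if call.tool in HIGH_RISK_TOOLS:
        # High-risk actions never run on untrusted context, and otherwise
        # still need a human approval step.
        return Decision.DENY if tainted else Decision.REQUIRE_APPROVAL
    return Decision.ALLOW
```

Under this policy, a refund proposed after the model read an inbound email is denied outright, while the same refund requested directly by the user is routed to an approver.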

Supply chain risks and malicious MCP servers

MCP introduces supply chain considerations that are often overlooked. Organizations may rely on third-party MCP servers or shared repositories to accelerate development. However, this supply chain introduces risk if those servers are not verified, maintained, and governed according to enterprise standards.

Malicious MCP servers can be designed to mimic legitimate services while extracting sensitive data or injecting harmful logic. If a compromised MCP server is integrated into an environment, the server may expose sensitive data sources or create hidden pathways for data exfiltration.

These types of breaches are difficult to detect because the server appears trusted and the protocol behavior looks normal from the outside. In mature environments, MCP reviews should cover every server dependency.

This is where protocol standardization can create a false sense of safety. A standard specification improves interoperability, but it does not certify trustworthiness. Enterprises still need to validate who operates the servers, how they store context, how they authenticate requests, whether data is encrypted in transit and at rest, and how changes are reviewed.

Mitigating supply chain risk requires validating sources, enforcing signed artifacts, isolating third-party integrations, and monitoring all traffic between MCP clients and external servers.
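A lightweight complement to signed artifacts is pinning: record a digest of each tool definition at review time and refuse any definition that no longer matches. Note that this is hash pinning rather than cryptographic signature verification, so it detects silent changes but does not prove who published the definition. The function names and the sample tool below are hypothetical.

```python
import hashlib
import json


def definition_digest(tool_definition: dict) -> str:
    """Hash a tool definition in a canonical form so key order is irrelevant."""
    canonical = json.dumps(tool_definition, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def is_approved(tool_definition: dict, pinned_digests: dict) -> bool:
    """Accept a definition only if it matches the digest pinned at review time."""
    name = tool_definition.get("name")
    return pinned_digests.get(name) == definition_digest(tool_definition)


# Hypothetical tool definition captured when the server was reviewed.
reviewed = {"name": "get_order", "description": "Look up an order by ID."}
PINNED = {"get_order": definition_digest(reviewed)}
```

If a third-party server later changes the description or schema of `get_order`, the digest no longer matches and the tool can be held back until it is reviewed again.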

Unauthorized command execution

In poorly controlled environments, MCP tools may execute commands without sufficient validation. This is particularly risky in non-sandboxed deployments where actions are performed directly against production systems.

Without proper guardrails, an AI model could execute malicious or unintended commands, especially if influenced by compromised prompts or inaccurate context. Attackers can exploit these gaps to trigger unauthorized changes, leading to operational disruptions, harmful configuration changes, or data loss.

For instance, an agent that can update orders, modify account records, or trigger refunds becomes a direct operational risk if its commands are not validated by policy. These attacks can spread quickly across connected systems.

Mitigating this risk requires strict validation layers, execution policies, and isolating sensitive operations behind additional controls. High-impact actions should pass through approval gates, policy checks, or dedicated gateway services that can inspect inputs and enforce business rules before commands reach backend servers.
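As an example of a validation layer for a high-impact action, the sketch below checks a refund request against simple business rules before it is forwarded. The `check_refund` function, the argument shape, and the auto-approval threshold are assumptions chosen for illustration, not values taken from any real system.

```python
from decimal import Decimal, InvalidOperation

# Hypothetical threshold above which a human must approve the refund.
REFUND_AUTO_LIMIT = Decimal("100.00")


def check_refund(arguments: dict, order_total: Decimal) -> str:
    """Return 'allow', 'needs_approval', or 'reject' for a refund request."""
    try:
        amount = Decimal(str(arguments["amount"]))
    except (KeyError, InvalidOperation):
        return "reject"  # missing or non-numeric amount
    if not amount.is_finite() or amount <= 0:
        return "reject"  # NaN, infinity, zero, or negative
    if amount > order_total:
        return "reject"  # cannot refund more than was charged
    if amount > REFUND_AUTO_LIMIT:
        return "needs_approval"
    return "allow"
```

Keeping this check outside the model means a manipulated prompt can change what the agent asks for, but not what the backend will accept.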

Exposed credentials and sensitive data

MCP workflows often involve the exchange of credentials, tokens, and identity information. If these elements are not encrypted or properly managed, they can be exposed through logs, misconfigured endpoints, or insecure data flows.

Sensitive data sources may also be inadvertently exposed if permissions are not enforced consistently. For example, logs that capture prompts or responses may include confidential customer details, financial information, or internal ID values if not properly sanitized. These exposures can lead to breaches that extend beyond MCP into other systems, including privacy and compliance incidents.

In the worst cases, the result includes credential theft and data theft.

Mitigating this risk requires encrypting sensitive data, securing token storage, and enforcing strict data handling policies. Teams should review where context is stored, which servers retain request history, and whether external systems receive more information than they need. Privacy should be treated as part of the core design, not as a later compliance check.
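Log sanitization is one place where a small amount of code goes a long way. The sketch below redacts values under sensitive keys and masks bearer tokens and email addresses inside free text before an entry is written. The key list and patterns are assumptions and would need tuning for real data; pattern-based redaction is a safety net, not a guarantee.

```python
import re

# Illustrative patterns only; real deployments need broader coverage.
BEARER_RE = re.compile(r"(?i)\bbearer\s+[A-Za-z0-9\-._~+/]+=*")
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SENSITIVE_KEYS = {"password", "api_key", "token", "authorization"}


def redact_text(text: str) -> str:
    """Mask bearer tokens and email addresses inside free text."""
    text = BEARER_RE.sub("Bearer [REDACTED]", text)
    return EMAIL_RE.sub("[REDACTED_EMAIL]", text)


def redact(value):
    """Recursively sanitize a log entry made of dicts, lists, and strings."""
    if isinstance(value, dict):
        return {
            key: "[REDACTED]" if str(key).lower() in SENSITIVE_KEYS else redact(item)
            for key, item in value.items()
        }
    if isinstance(value, list):
        return [redact(item) for item in value]
    if isinstance(value, str):
        return redact_text(value)
    return value
```

Applying `redact` at the point where prompts and responses are logged keeps raw credentials and customer identifiers out of log storage, which is often less tightly controlled than the systems of record.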

Session hijacking

Session management is another critical area of concern. In MCP environments, sessions may span multiple systems and interactions, creating opportunities for interception or misuse. Attackers may attempt to impersonate legitimate users or agents by hijacking active sessions.

If session tokens are not encrypted or properly rotated, they can be reused by malicious actors. Server-side request forgery (SSRF) attacks compound this risk by allowing attackers to manipulate how MCP servers process requests. A malicious request can cause a server to reach internal resources, retrieve hidden context, or expose metadata that should never leave trusted boundaries. Similar attacks have been studied across Microsoft ecosystems and other enterprise platforms.

Mitigating session risks requires strong session management practices, including token rotation, encryption, replay protection, and validation of every request. Detection controls also matter here. Security teams need visibility into unusual session reuse, unusual server-to-server patterns, and access attempts from unexpected contexts.
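For the request-forgery side of this risk, a common defensive pattern is to validate any outbound URL an MCP server is asked to fetch against an explicit allowlist. The sketch below uses only the Python standard library. The allowlisted host is hypothetical, and the check does not cover DNS rebinding or redirects, which need additional controls at the network layer.

```python
import ipaddress
from urllib.parse import urlsplit

# Hypothetical allowlist of hosts this server is permitted to call.
ALLOWED_HOSTS = {"api.example.com"}


def is_safe_outbound_url(url: str) -> bool:
    """Allow only HTTPS requests to explicitly allowlisted hostnames."""
    try:
        parts = urlsplit(url)
        host = parts.hostname
    except ValueError:
        return False  # malformed URL
    if parts.scheme != "https" or host is None:
        return False
    try:
        ipaddress.ip_address(host)
        return False  # literal IP addresses are rejected outright
    except ValueError:
        pass  # not an IP literal, so fall through to the hostname check
    return host in ALLOWED_HOSTS
```

With this guard in place, a request that points at a cloud metadata address or an internal hostname is refused before the server makes any network call.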

Where MCP security risks appear in enterprise AI workflows

MCP security risks rarely appear in isolation. In enterprise environments, they typically emerge when AI agents invoke tools that interact with core operational systems such as CRM, ERP, billing, and finance platforms. These workflows are often complex and involve multiple data sources, making them particularly sensitive to security gaps and breaches.

Because MCP operates within a client-server architecture, each interaction between an AI model and a tool represents a potential entry point. If authorization controls are weak or permissions are misconfigured, a single compromised tool call can propagate across systems. The challenge is that enterprise workflows are interconnected. One action often triggers several more, which means a small lapse in one part of the protocol chain can create large business consequences. MCP adds speed, but it can also amplify failure.

Revenue operations workflows

In revenue operations, AI agents may update CRM records, generate quotes, trigger billing workflows, create sales follow-ups, or enrich account data from external sources. These actions rely on accurate data sources and precise authorization controls. If an MCP tool is compromised, attackers could manipulate pricing, inject malicious data, trigger incorrect billing events, or expose sensitive pipeline information.

This becomes especially risky when multiple servers are connected to the same customer lifecycle. An agent may gather context from one system, use that context to update another, and then notify a third. If the initial context is manipulated or the permissions on one server are too broad, the full workflow can be corrupted.

Mitigating these risks requires enforcing strict permissions, validating every request, and monitoring for anomalies across all revenue-facing servers and protocols.

Commerce operations workflows

Commerce workflows often span eCommerce platforms, ERP systems, warehouse systems, shipping tools, and fulfillment networks. MCP enables AI agents to orchestrate these processes by invoking tools across multiple systems. However, this also increases the risk of data exfiltration and malicious interference if controls are not enforced consistently.

An attacker who gains access to a commerce-related server may be able to alter order statuses, reroute shipments, modify inventory updates, or exploit refund logic. Even when no theft occurs, the result can be costly operational disruption.

Isolating systems, using dedicated servers by use case, and applying consistent authentication policies help reduce the likelihood of breaches across interconnected environments. Similar attacks have shaped guidance from Microsoft and other enterprise vendors.

Finance operations

Finance workflows introduce additional sensitivity. AI agents may automate refunds, handle chargebacks, reconcile transactions, update payment systems, or route exceptions for review. These operations depend on secure access to financial data sources and strict authorization.

If attackers gain access to MCP-enabled finance tools, they could execute fraudulent transactions, alter records, or extract sensitive financial data. Finance teams need stronger controls than simple connectivity. Mitigating these risks requires layered controls, encrypted data handling, detection of unusual activity, and clear separation between read-only context retrieval and write-capable action execution. Microsoft-focused environments often apply similar control principles to payment and identity workflows.

MCP security controls and best practices

Addressing MCP security risks requires a structured approach that aligns with modern zero-trust principles. Organizations must design controls that account for how AI agents interact with systems, data sources, and users while mitigating evolving threats.

The goal is not to block innovation. It is to establish robust operating boundaries around the protocol, servers, and workflows.

For MCP, zero trust is not optional.

Implement strong authentication and OAuth best practices

Strong authentication is foundational. Organizations should enforce identity verification for all MCP interactions, integrating with a trusted identity provider to ensure consistent access control. Each request should be tied to a verified ID and validated continuously, not just at session start.

Enabling SSO through OIDC and requiring MFA adds further layers of protection. OAuth best practices should include secure token handling, short-lived tokens, encrypted storage, and explicit validation by backend servers before privileged actions are executed.

These measures help prevent attackers from exploiting authentication gaps or reusing stolen tokens.

Enforce least privilege and scope minimization

Least privilege is critical in MCP environments. Only the necessary tools and data sources should be exposed to each AI model, and fine-grained permissions should ensure that agents can perform only the actions required. Where an MCP server exposes an organization's own custom APIs, those APIs should themselves follow API security best practices.

Mitigating overexposure involves defining strict scopes, segmenting access, and continuously reviewing permissions. This reduces the risk of breaches caused by excessive access rights and helps contain malicious misuse when one server or workflow is compromised.
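One simple way to express scope minimization is to compute the permissions an agent may exercise as the intersection of what the initiating user holds and what the agent was granted. This keeps an agent with broad scopes from acting beyond the user it represents. The sketch below assumes scopes are plain strings; the scope names are hypothetical.

```python
def effective_scopes(user_scopes, agent_scopes) -> frozenset:
    """Scopes usable for this request: held by both the user and the agent."""
    return frozenset(user_scopes) & frozenset(agent_scopes)


def can_invoke(tool_required_scopes, user_scopes, agent_scopes) -> bool:
    """A tool may run only if every scope it needs is in the effective set."""
    needed = frozenset(tool_required_scopes)
    return needed <= effective_scopes(user_scopes, agent_scopes)


# Hypothetical example: a read-only user working through a broadly scoped agent.
user = {"crm:read"}
agent = {"crm:read", "crm:write", "billing:write"}
```

In the example values, the agent could technically write to the CRM, but a request made on behalf of this user can only read from it.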

Isolate MCP endpoints by use case

Segmentation plays a key role in reducing risk. MCP endpoints and servers should be isolated based on use case, environment, and application context. Keeping systems isolated limits the impact of malicious activity.

Separating production and test environments and isolating high-risk workflows ensures that a compromise in one area does not affect others. This aligns with zero-trust principles and reduces overall exposure. It also makes detection easier because abnormal patterns are easier to spot when servers have narrower responsibilities.

Monitor and log all MCP interactions

Comprehensive monitoring is essential for maintaining a strong security posture. Organizations should log all MCP interactions, including prompts, inputs, outputs, tool choices, and execution traces.

Monitoring enables early detection of malicious behavior and supports incident response. Regular audits help identify anomalies and mitigate risks before they escalate into breaches. Teams should ensure that logs from servers, gateway layers, and external systems can be correlated for complete visibility.
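Correlation is easier when every component writes the same identifier into a structured record. The sketch below emits one JSON audit line per tool call with a shared correlation ID. The field names are assumptions, and the record stores argument keys rather than values as a deliberate choice to limit sensitive data in logs; teams that need full inputs could log sanitized values instead.

```python
import json
import uuid
from datetime import datetime, timezone


def audit_record(
    correlation_id: str, user_id: str, tool: str, arguments: dict, outcome: str
) -> str:
    """Build one structured audit line for a single tool invocation."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "correlation_id": correlation_id,  # shared across servers and gateways
        "user_id": user_id,
        "tool": tool,
        "argument_keys": sorted(arguments),  # keys only, not values
        "outcome": outcome,
    }
    return json.dumps(record, sort_keys=True)


# Hypothetical invocation; the same ID would be passed to downstream systems.
correlation_id = str(uuid.uuid4())
line = audit_record(
    correlation_id, "user-42", "get_order", {"order_id": "O-9"}, "allowed"
)
```

Passing the same `correlation_id` through the gateway, the MCP server, and the backend call lets an investigator reconstruct a full workflow from separate log stores.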

Govern tool exposure through a managed control layer

Unmanaged MCP servers introduce significant risk. Organizations should avoid deploying MCP capabilities without centralized governance. Managed control layers and a policy-enforcing gateway help enforce authentication, authorization, and policy consistency.

Centralized governance also reduces risk by providing visibility into all interactions and ensuring that only approved tools are accessible. This is especially important when multiple protocols, multiple servers, and multiple external systems are involved. It also helps standardize MCP oversight.
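A gateway can enforce the "only approved tools" rule at discovery time by filtering the tool list an upstream server returns before the agent ever sees it. The following is a minimal sketch of that idea. The agent IDs, tool names, and the `filter_tool_list` function are hypothetical, and a production gateway would also enforce the same catalog again at call time.

```python
# Hypothetical per-agent catalog maintained by a governance team.
APPROVED_TOOLS_BY_AGENT = {
    "support-bot": {"search_tickets", "get_order"},
    "finance-agent": {"get_invoice", "issue_refund"},
}


def filter_tool_list(agent_id: str, tools: list) -> list:
    """Return only the tool definitions this agent is approved to see."""
    approved = APPROVED_TOOLS_BY_AGENT.get(agent_id, set())
    return [tool for tool in tools if tool.get("name") in approved]


# Tool definitions as an upstream server might advertise them.
upstream_tools = [
    {"name": "search_tickets"},
    {"name": "get_order"},
    {"name": "issue_refund"},
    {"name": "run_shell"},
]
```

Agents with no catalog entry receive an empty list, so a newly connected agent has no tool access until someone approves it explicitly.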

Establish user transparency and consent

Transparency is essential when AI agents act on behalf of users. Organizations should clearly define permissions and ensure that users understand what actions agents can take.

Maintaining audit trails, consent records, and oversight mechanisms helps prevent misuse and ensures accountability across MCP workflows. It also supports privacy requirements by showing how context was used, which servers received it, and what actions were taken.

The future of MCP security in enterprise AI systems

Enterprise AI is evolving rapidly. AI agents are shifting from passive assistants to active participants in business operations. As MCP adoption grows, organizations must adapt their security posture to address new challenges and mitigate increasingly sophisticated threats.

Securing MCP will require more than traditional API security. Enterprises will need centralized governance, fine-grained authorization, and continuous monitoring of agent activity. Zero-trust architectures will become the foundation for managing access across systems and data sources, especially as more servers expose business capabilities through standard protocols.

As LLMs and other AI models become more capable, attackers will also evolve their tactics. More attacks will target the context layer, the protocol layer, and the servers that bridge AI with operational systems.

Microsoft and other major platform providers are already shaping how enterprises think about AI governance, identity, and secure tool access, but each organization still needs its own enforceable control model.

The future of MCP security will depend on making these interactions measurable, reviewable, and policy-driven, with governance that is local to each organization and enforceable in practice.

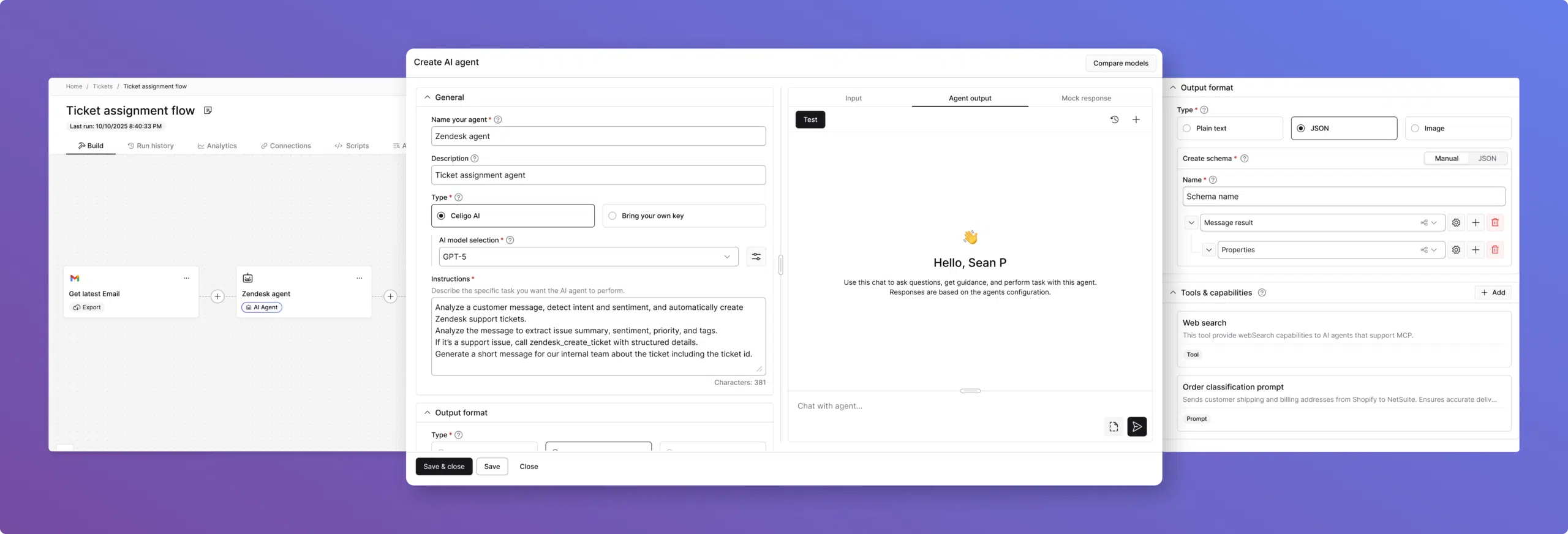

How Celigo helps secure MCP-enabled AI workflows

Celigo provides a secure orchestration layer between AI agents and enterprise systems, strengthening MCP deployments with centralized governance and control. While MCP defines how agents interact with tools, Celigo ensures those interactions are managed, monitored, and secured.

By enforcing consistent authentication and authorization policies, Celigo helps organizations maintain a strong security posture across all MCP workflows. It integrates with identity provider systems to standardize access control and reduce the risk of unauthorized actions across servers and protocols.

Celigo also enhances visibility. It monitors cross-system flows, captures execution data, and surfaces anomalies that may indicate malicious behavior or potential breaches. This level of observability is critical for mitigating risks at scale, improving detection, and maintaining privacy controls across connected environments.

As AI adoption accelerates, MCP security becomes a foundational requirement. A robust integration layer ensures that agent-driven workflows remain secure, auditable, and aligned with enterprise standards. It gives enterprises a controlled way to connect AI to external systems without relying on ad hoc servers or unmanaged protocols. For enterprises scaling MCP, that control layer matters.
